A metadata and observability framework with Fractal and OpenAI

Trustworthy AI depends not only on model performance, but on the metadata that captures how systems are built, deployed, monitored, and governed. This article presents a four-layer metadata framework for creating that evidence across the AI lifecycle. It highlights how OpenAI tooling provides the audit, tracing, and observability signals needed for stronger enterprise governance, and how Fractal's expertise turns those raw logs and signals into a usable, scalable governance system. The goal: trustworthy AI through evidence, control, and accountability, not model capability alone.

Introduction

Trustworthy AI is not solely a function of model quality; it is a function of evidence. In the era of generative and agentic AI, the line between an impressive prototype and a defensible deployment is drawn by the metadata an organization captures and operationalizes.

Without it, organizations default to "instant denial," deflecting responsibility for failures onto the technology rather than the governance gaps that caused them. With it, leaders can demonstrate where data originated, how models were trained and evaluated, how decisions were made, and how risks were mitigated. Policy becomes proof.

Regulation has formalized this reality. The EU AI Act, for example, requires sufficient technical documentation (Article 11), mandates robust risk management systems (Article 9), governs training and validation datasets (Article 10), calls for operational transparency (Article 13), and requires automatic recording of events for high-risk systems (Article 12). These provisions reflect a broader shift toward documentation, traceability, and risk controls in enterprise AI.

The challenge is no longer whether to manage metadata, but how to do so comprehensively, while sustaining the agility that enterprise AI demands.

A four-layer metadata framework

Effective AI governance requires a structured approach to metadata management. The framework below gives data leaders and governance professionals a comprehensive blueprint for documenting AI systems throughout their lifecycle. EU AI Act references are included as illustrative examples of how metadata supports emerging regulatory expectations, not as legal guidance.

Figure: Four-Layer Metadata Framework for Trustworthy AI. Governance evidence across the full AI lifecycle.

Layer 1 — Data provenance

The foundation of AI governance is knowing exactly where data came from and how it was handled. This layer documents sources, ownership, and licensing; consent and collection methods; preprocessing pipelines, transformations, and quality checks; and usage restrictions, privacy rules, retention policies, and localization controls.

Regulatory alignment: EU AI Act Article 10 on dataset governance and quality.
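
As a minimal sketch of what a Layer 1 record might look like, the dataclass below collects the provenance fields named above and reports which ones are still empty. The class name, field names, and the `missing_fields` helper are hypothetical and chosen only for illustration; a real schema would follow the organization's own data catalog.

```python
from dataclasses import dataclass, asdict
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class DatasetProvenance:
    """Hypothetical Layer 1 record; field names are illustrative only."""
    dataset_id: str
    source: str
    owner: str
    license: str
    consent_basis: str
    collection_method: str
    transformations: tuple = ()
    quality_checks: tuple = ()
    usage_restrictions: tuple = ()
    retention_until: Optional[date] = None
    allowed_regions: tuple = ()


def missing_fields(record: DatasetProvenance) -> list:
    """Return the names of fields left empty, in declaration order."""
    return [
        name
        for name, value in asdict(record).items()
        if value in ("", (), None)
    ]


record = DatasetProvenance(
    dataset_id="claims-2024-q1",
    source="internal claims warehouse",
    owner="data-platform-team",
    license="internal-use-only",
    consent_basis="",  # not yet documented
    collection_method="batch export",
    transformations=("deduplicate", "mask_pii"),
)
print(missing_fields(record))
```

Running a check like this before a dataset is approved for training surfaces documentation gaps early, rather than during an audit.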

Layer 2 — Model development

Accountability for what was built and how it performs. This layer captures architectures, hyperparameters, training runs, and compute environments; test sets, metrics, and robustness or adversarial evaluations; and fairness assessments, bias mitigation approaches, and version lineage.

Regulatory alignment: EU AI Act Articles 11 and 12 on technical documentation and record-keeping (automatic event logging).

Layer 3 — Deployment context

Governance does not stop at model training. This layer documents intended use and limitations; decision thresholds and fallback paths; human oversight design, including escalation triggers and authority frameworks; integrations and dependencies; monitoring SLIs/SLOs and feedback loops; and, for agentic systems, execution-environment metadata such as harness/sandbox separation, mounted data sources, exposed ports, and resumable session state.

Regulatory alignment: EU AI Act Article 13 on transparency and appropriate use.
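
Decision thresholds, fallback paths, and escalation triggers are easier to audit when they are recorded as data rather than buried in application logic. The sketch below assumes a single confidence score and two hypothetical thresholds; the names `DeploymentPolicy` and `route_decision`, and the three route labels, are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeploymentPolicy:
    """Hypothetical Layer 3 record of documented decision thresholds."""
    intended_use: str
    auto_threshold: float        # at or above: the automated path is allowed
    escalation_threshold: float  # below: a human reviewer must decide


def route_decision(confidence: float, policy: DeploymentPolicy) -> str:
    """Map a confidence score to the documented handling path."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= policy.auto_threshold:
        return "automated"
    if confidence >= policy.escalation_threshold:
        return "fallback"  # for example, a simpler rule-based path
    return "human_review"


policy = DeploymentPolicy(
    intended_use="triage of inbound support tickets",
    auto_threshold=0.9,
    escalation_threshold=0.6,
)
for score in (0.95, 0.7, 0.2):
    print(score, route_decision(score, policy))
```

Because the policy object is plain data, it can be versioned and attached to each decision record alongside the route that was taken.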

Layer 4 — Governance process

The operational discipline that keeps AI systems accountable over time. This layer tracks approval workflows, accountable owners, and separation of duties; risk assessments, mitigations, and incident response playbooks; and compliance checks, legal holds, review cadences, and retirement criteria.

Regulatory alignment: EU AI Act Article 9 on risk management systems.
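
Separation of duties in an approval workflow can be checked mechanically. The following is a minimal sketch, assuming each approval carries an approver identity and a role; the `Approval` type, the `approval_gaps` function, and the role names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Approval:
    approver: str
    role: str


def approval_gaps(author: str, approvals: list, required_roles: set) -> list:
    """List the reasons a change is not yet properly approved."""
    gaps = []
    # Approvals given by the change author do not count as independent review.
    independent = [a for a in approvals if a.approver != author]
    if len(independent) < len(approvals):
        gaps.append("author approved own change")
    missing = required_roles - {a.role for a in independent}
    gaps.extend(f"missing approval from role: {role}" for role in sorted(missing))
    return gaps


approvals = [Approval("alice", "risk"), Approval("bob", "legal")]
print(approval_gaps("alice", approvals, {"risk", "legal"}))
```

An empty list means the documented approval requirements are met; anything else can be routed to the accountable owner.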

How OpenAI products map to the trust stack

OpenAI provides several first-party surfaces that feed directly into this metadata framework. They are best understood as telemetry and control-plane inputs, not as a complete enterprise system of record. Fractal normalizes these signals and governs them as auditable evidence, adding lineage, ownership, policy mappings, retention controls, and audit-ready reporting.

Compliance, auditability, and retention

For ChatGPT Enterprise workspaces, the OpenAI Compliance Platform provides access to workspace logs and metadata that organizations can integrate with eDiscovery, DLP, SIEM, and data lake tooling. The design question is not only whether logs exist, but also whether they are exported continuously, normalized consistently, joined with identity and policy context, and retained in accordance with the customer's own governance requirements.

The Compliance Platform supports two complementary patterns: immutable, append-only log events for audit use cases, and state and query access for joining data and supporting legacy audit needs. Because log schemas, event types, and access patterns can evolve, implementation plans should validate the current route, event schema, and export requirements before publication or production rollout.
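
Once events are exported, the customer-side store can preserve the append-only property in a tamper-evident way. The sketch below uses a simple hash chain for that purpose. It illustrates a general technique for the enterprise's own archive and is not a description of how the Compliance Platform implements immutability; the event fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    # Canonical JSON so the same event always hashes to the same value.
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_event(chain: list, event: dict) -> None:
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append(
        {"event": event, "prev_hash": prev_hash, "hash": _digest(prev_hash, event)}
    )


def verify_chain(chain: list) -> bool:
    """Recompute every link; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


archive = []
append_event(archive, {"id": "evt_1", "type": "login"})
append_event(archive, {"id": "evt_2", "type": "file_upload"})
print(verify_chain(archive))
```

Modifying any stored event after the fact causes `verify_chain` to return `False`, which gives auditors a cheap integrity check over the exported archive.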

For API Platform organizations, the Admin API enables programmatic management of organization and project administration surfaces, while the Audit Logs API provides an immutable, auditable event stream for security, compliance, and operational review. This is most valuable when mapped into enterprise IAM, CMDB, ticketing, SIEM, and GRC systems so each administrative event can be tied back to ownership, approval, and risk posture.
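
To illustrate the mapping step, the sketch below joins an administrative event with ownership and approval context. The event shape (`id`, `type`, `project_id`) and the lookup tables are assumptions made for this example; the actual audit log schema should be taken from the current OpenAI documentation.

```python
def enrich_event(event: dict, owners: dict, approvals: dict) -> dict:
    """Attach owner and approval context to an administrative event.

    owners maps a project ID to an accountable team (for example from a CMDB);
    approvals maps an event ID to a ticket reference. Both are hypothetical.
    """
    enriched = dict(event)  # leave the original event untouched
    enriched["owner"] = owners.get(event.get("project_id"), "UNASSIGNED")
    enriched["approval_ticket"] = approvals.get(event.get("id"))
    enriched["needs_review"] = (
        enriched["owner"] == "UNASSIGNED" or enriched["approval_ticket"] is None
    )
    return enriched


event = {"id": "evt_1", "type": "api_key.created", "project_id": "proj_a"}
owners = {"proj_a": "payments-team"}
approvals = {}  # no ticket was found for this event

print(enrich_event(event, owners, approvals))
```

Events flagged with `needs_review` are the ones that cannot yet be tied back to ownership or approval, which is where a governance team would focus first.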

Retention and deletion behavior vary by product surface, customer configuration, and export pattern. The Compliance Logs Platform retains log data for a limited period unless customers continuously export and retain those logs in accordance with their own policies. Teams should validate current retention windows, logging behavior, deletion semantics, and administrative controls in the latest OpenAI documentation and contract terms before making governance commitments.
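
The practical risk is an export gap longer than the source retention window, since events created just after one export could expire before the next. A minimal check, assuming a known list of successful export timestamps and a retention window supplied by the team, might look as follows. The 30-day value is purely a placeholder and not a documented OpenAI retention period.

```python
from datetime import datetime, timedelta


def unsafe_export_gaps(export_times, retention, safety_margin=timedelta(0)):
    """Return consecutive export pairs spaced further apart than is safe.

    A gap is unsafe when it exceeds the retention window minus the margin.
    """
    limit = retention - safety_margin
    ordered = sorted(export_times)
    return [
        (earlier, later)
        for earlier, later in zip(ordered, ordered[1:])
        if later - earlier > limit
    ]


exports = [datetime(2025, 1, 1), datetime(2025, 1, 20), datetime(2025, 3, 5)]
assumed_retention = timedelta(days=30)  # placeholder; validate the real value

for earlier, later in unsafe_export_gaps(exports, assumed_retention):
    print("possible data loss between", earlier.date(), "and", later.date())
```

A safety margin can be passed to alert before the gap actually reaches the retention limit.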

OpenAI states that ChatGPT Enterprise has successfully completed a SOC 2 Type 2 audit. Supporting security, privacy, and compliance documentation is available through the OpenAI Trust Portal. OpenAI also states that its business data protection practices for the API Platform align with SOC 2 Type 2 Trust Services Criteria.

Tracing, grading, and observability for Agentic AI

The OpenAI Agents SDK includes built-in tracing in the normal server-side SDK path. A trace captures a structured record of model calls, tool calls, outputs, handoffs, guardrails, and custom spans, all of which are inspectable in the Traces dashboard. Trace grading then lets teams score those traces against structured criteria to identify regressions, workflow-level failure modes, tool-selection errors, handoff issues, or policy violations at scale.
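
The idea of scoring traces against structured criteria can be sketched without any SDK. The example below is not the Agents SDK or the trace grading API; it uses plain dictionaries as a hypothetical, simplified stand-in for spans, and the criteria names are illustrative.

```python
def only_allowed_tools(allowed: set):
    """Build a criterion that passes when every tool call is on the allowlist."""
    def check(spans: list) -> bool:
        return all(s["name"] in allowed for s in spans if s["type"] == "tool_call")
    return check


def no_guardrail_trips(spans: list) -> bool:
    """Pass when no guardrail span reports that it was triggered."""
    return not any(s["type"] == "guardrail" and s.get("triggered") for s in spans)


def grade_trace(spans: list, criteria: dict) -> dict:
    """Evaluate each named criterion and add an overall 'passed' flag."""
    results = {name: check(spans) for name, check in criteria.items()}
    results["passed"] = all(results.values())
    return results


trace_spans = [
    {"type": "model_call", "name": "draft_answer"},
    {"type": "tool_call", "name": "search"},
    {"type": "tool_call", "name": "delete_records"},
    {"type": "guardrail", "name": "pii_check", "triggered": False},
]
criteria = {
    "allowed_tools": only_allowed_tools({"search"}),
    "no_guardrail_trips": no_guardrail_trips,
}
print(grade_trace(trace_spans, criteria))
```

Here the trace fails on tool selection while passing the guardrail criterion, which is the kind of per-criterion result that makes regressions visible across many runs.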

For governance teams, this matters because agent behavior becomes inspectable as a sequence of attributable decisions rather than an opaque final answer.

For workflows that require stateful, long-running execution, Sandbox Agents in the Python Agents SDK provide a container-based, Unix-like execution environment with files, commands, packages, mounted data, exposed ports, snapshots, and resumable state. The architectural distinction is important: the harness is the control plane, owning orchestration, tool routing, approvals, tracing, recovery, and run state. The sandbox is the execution plane, where model-directed work manipulates files, runs commands, installs dependencies, serves previews, and snapshots workspace state. Governance metadata should document both planes without unnecessarily retaining sensitive runtime content.
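
One way to document both planes without retaining runtime content is to store identifiers and content digests instead of the content itself. The record types below are a hypothetical sketch, not Agents SDK types; the field names are assumptions drawn from the concepts described above.

```python
import hashlib
from dataclasses import dataclass, asdict


def fingerprint(content: bytes) -> str:
    """Return a SHA-256 digest so content can be referenced without storing it."""
    return hashlib.sha256(content).hexdigest()


@dataclass(frozen=True)
class HarnessRecord:
    """Control-plane metadata: orchestration, approvals, tracing."""
    run_id: str
    trace_id: str
    approvals: tuple


@dataclass(frozen=True)
class SandboxRecord:
    """Execution-plane metadata: environment shape, not file contents."""
    run_id: str
    mounted_sources: tuple
    exposed_ports: tuple
    snapshot_id: str
    workspace_digest: str


def run_evidence(harness: HarnessRecord, sandbox: SandboxRecord) -> dict:
    """Combine both planes into one evidence record for the same run."""
    if harness.run_id != sandbox.run_id:
        raise ValueError("records belong to different runs")
    return {"control_plane": asdict(harness), "execution_plane": asdict(sandbox)}


workspace = b"customer_id,balance\n1001,250.00\n"  # sensitive runtime content
evidence = run_evidence(
    HarnessRecord("run-7", "trace-7", ("deploy-approved-by:ops",)),
    SandboxRecord("run-7", ("s3://reports",), (8080,), "snap-3", fingerprint(workspace)),
)
print(evidence["execution_plane"]["workspace_digest"])
```

The digest lets an auditor confirm later that a retained snapshot matches what the agent worked on, while the evidence record itself holds no sensitive data.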

Transparency and safety artifacts

OpenAI publishes model-behavior guidance through the OpenAI Model Spec and shares public safety materials through the Deployment Safety Hub, where teams can review system cards, evaluation summaries, and documentation on measured risks, safeguards, and mitigations used in deployed systems. These artifacts should be referenced as supporting evidence and design inputs; enterprise-specific controls still need to be mapped to the organization's own risk taxonomy, use-case tiering, and approval process.

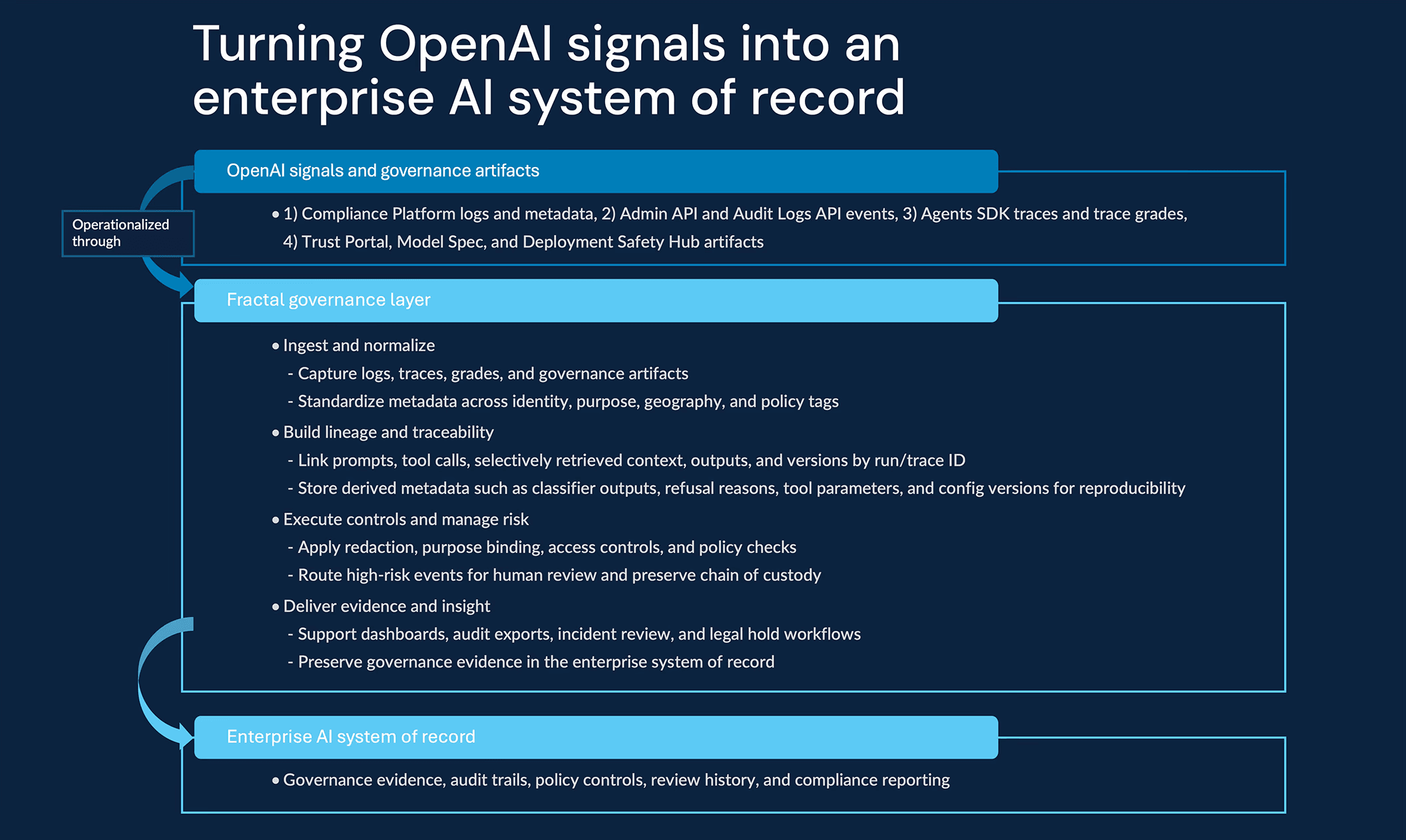

From signals to a system of record

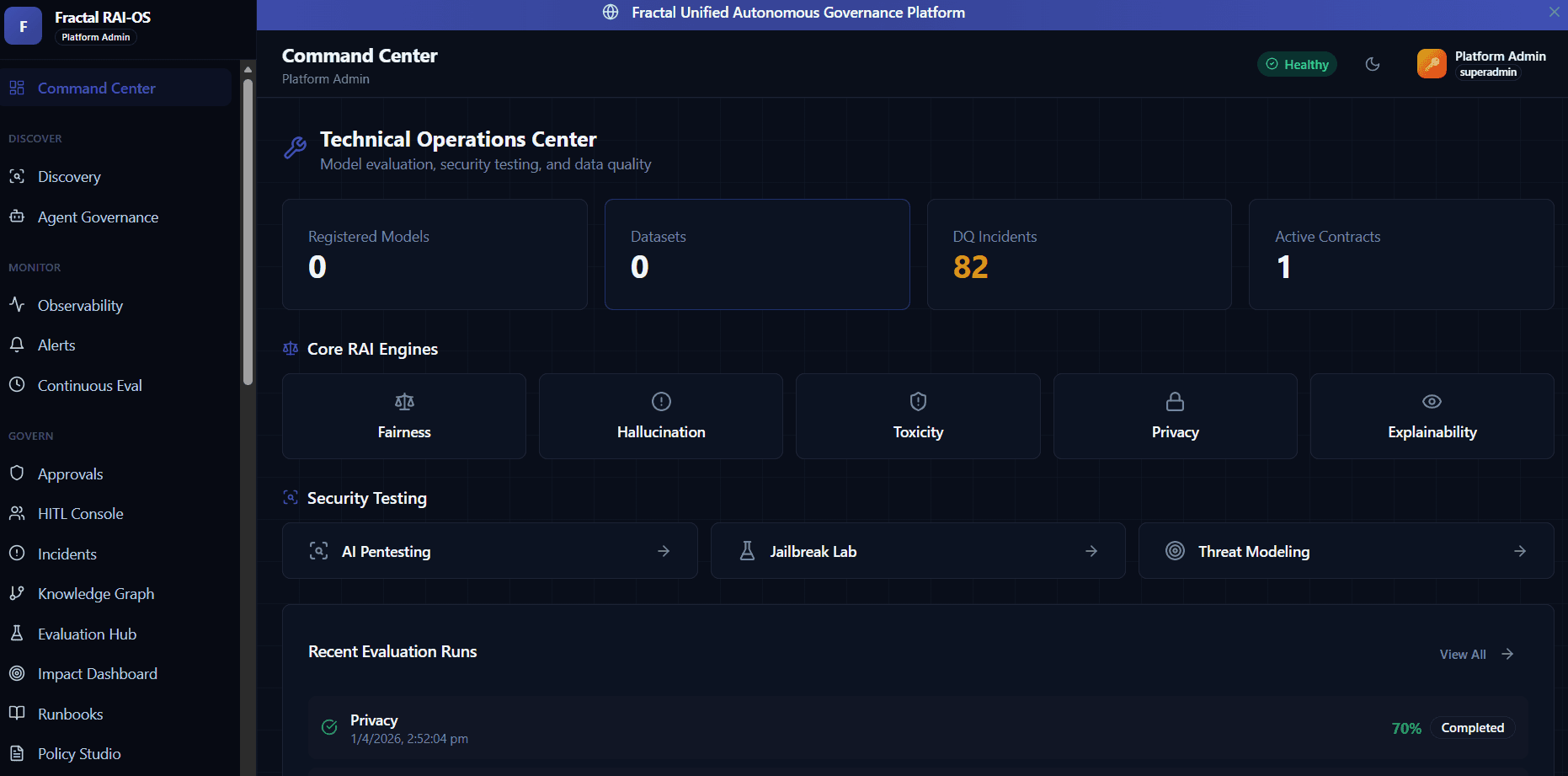

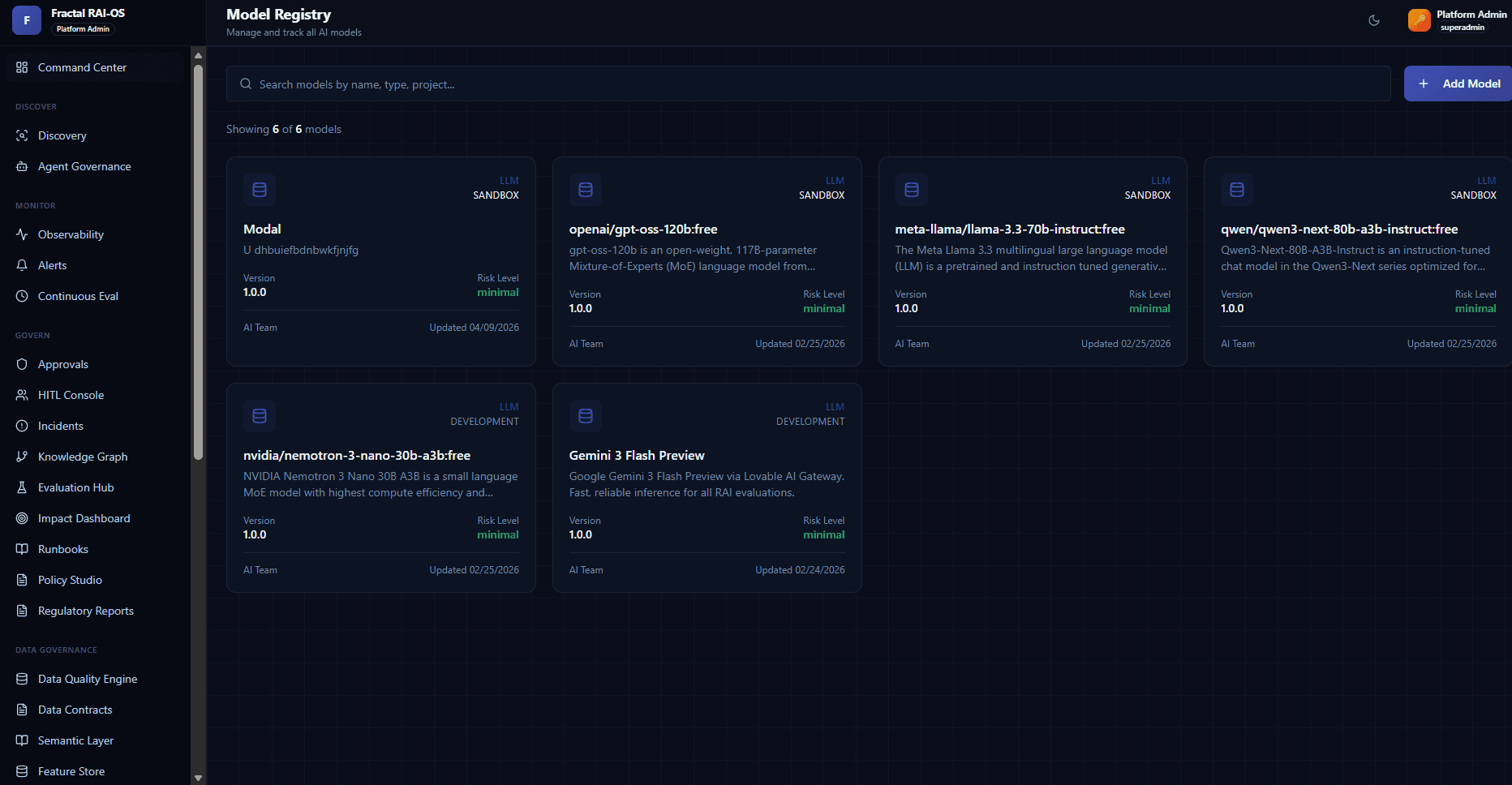

OpenAI supplies high-fidelity operational signals: compliance logs, platform audit events, traces, grades, and supporting safety artifacts. Fractal's governance layer turns those signals into an enterprise evidence graph by linking prompt and workflow metadata, model and tool versions, dataset and policy lineage, human approvals, incident records, retention policies, and audit controls.

The value is in preserving the minimum evidence needed to reconstruct what happened, why it happened, who owned it, and which control was applied. Audit readiness becomes a by-product of production operations, not a manual reconstruction exercise performed under pressure.

In practice, this creates a governed operating layer: lineage tied to owners and versions, policies connected to evidence, exceptions routed to accountable teams, and every signal in a format that a GRC team can actually use.
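
To make the evidence-graph idea concrete, the sketch below links a few records and walks the graph to reconstruct what a given run depended on. It is a toy in-memory structure under assumed node and relation names, not a description of Fractal's implementation.

```python
from collections import defaultdict, deque


class EvidenceGraph:
    """Toy directed graph of (source, relation, target) evidence links."""

    def __init__(self):
        self._edges = defaultdict(list)

    def link(self, source: str, relation: str, target: str) -> None:
        self._edges[source].append((relation, target))

    def reconstruct(self, start: str) -> list:
        """Breadth-first walk returning every link reachable from start."""
        seen, queue, facts = {start}, deque([start]), []
        while queue:
            node = queue.popleft()
            for relation, target in self._edges.get(node, []):
                facts.append((node, relation, target))
                if target not in seen:
                    seen.add(target)
                    queue.append(target)
        return facts


graph = EvidenceGraph()
graph.link("run:42", "used_model", "model:v3")
graph.link("run:42", "approved_by", "approval:CHG-101")
graph.link("model:v3", "trained_on", "dataset:claims-2024-q1")

for fact in graph.reconstruct("run:42"):
    print(fact)
```

Starting from a single run identifier, the walk answers what was used, who approved it, and which data sat behind the model, which is the reconstruction an audit typically asks for.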

KPIs that signal trustworthy AI

For AI professionals and governance leaders, clear metrics are the difference between a governance framework that exists on paper and one that actually works. The following KPIs provide quantifiable evidence of AI trustworthiness in production:

Key considerations and caveats

Enterprises moving from AI experimentation to scaled deployment should plan for the following:

Validate vendor claims and configure policies: Confirm current retention windows, log schemas, export routes, product eligibility, certifications, and contractual controls via OpenAI documentation, the OpenAI Trust Portal, and customer-specific agreements.

Privacy by design: Minimize data sharing, apply redaction and data minimization, and segregate high-risk workloads and sensitive prompts from the outset.

Stateful agent boundaries: For long-running workflows, distinguish harness control-plane state, sandbox execution-plane state, exported compliance logs, and durable enterprise records, so that observability and debugging do not require retaining more sensitive content than necessary.

Defense in depth: Combine OpenAI telemetry with enterprise DLP, SIEM, and policy enforcement to avoid single-point-of-failure governance.

Cross-border and localization: Track geography in metadata and route and retain data according to regional rules and contractual obligations.
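
As an illustration of the privacy-by-design point above, redaction can be applied before prompts or logs leave a controlled boundary. The sketch below uses two deliberately simple regular expressions (email addresses and US-style Social Security numbers); they are illustrative assumptions, not a complete PII detector, and production systems would normally rely on dedicated DLP tooling.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact(text: str) -> str:
    """Replace matched identifiers with fixed placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return SSN.sub("[SSN]", text)


print(redact("Contact jane.doe@example.com, SSN 123-45-6789."))
```

Applying the same function to both outbound prompts and exported logs keeps the two evidence paths consistent.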

Conclusion

Metadata is the control plane for trustworthy AI, transforming black-box behavior into observable, auditable, and governable systems. OpenAI surfaces such as the Compliance Platform, Admin API, Audit Logs API, Agents SDK tracing, trace grading, and Sandbox Agents provide operational evidence across workspace activity, platform administration, and agentic execution. Resources such as the OpenAI Trust Portal, Model Spec, and Deployment Safety Hub provide documentation on security, behavior, and deployment safety.

Fractal helps enterprises translate these signals and artifacts into practical governance capabilities across lineage, policy enforcement, audit readiness, risk controls, and production operations.

Together, the goal is assurance rather than aspiration: AI systems that are transparent, accountable, and compliance-ready by design.

Continue the enterprise AI maturity journey

Trustworthy AI does not stop at governance. Once organizations have the metadata, traces, and evidence layer in place, the next question is how to design AI systems that use context effectively, reliably, and responsibly.

In our blog on Context Engineering with OpenAI, we explore how enterprises can structure the right context, instructions, tools, memory, and evaluation loops to improve the quality and reliability of AI systems in production.

Authors

Shikhar Kwatra

AMER Partner ADE Engineering Lead, OpenAI

Subeer Sehgal

Principal Consultant – Cloud & Data Tech, Fractal

Sonal Sudeep

Principal Consultant – Cloud & Data Tech, Fractal

Arushi Bafna

Lead AI Engineer – AI Client Services, Fractal

Suvam Ray

Lead AI Engineer – AI Client Services, Fractal