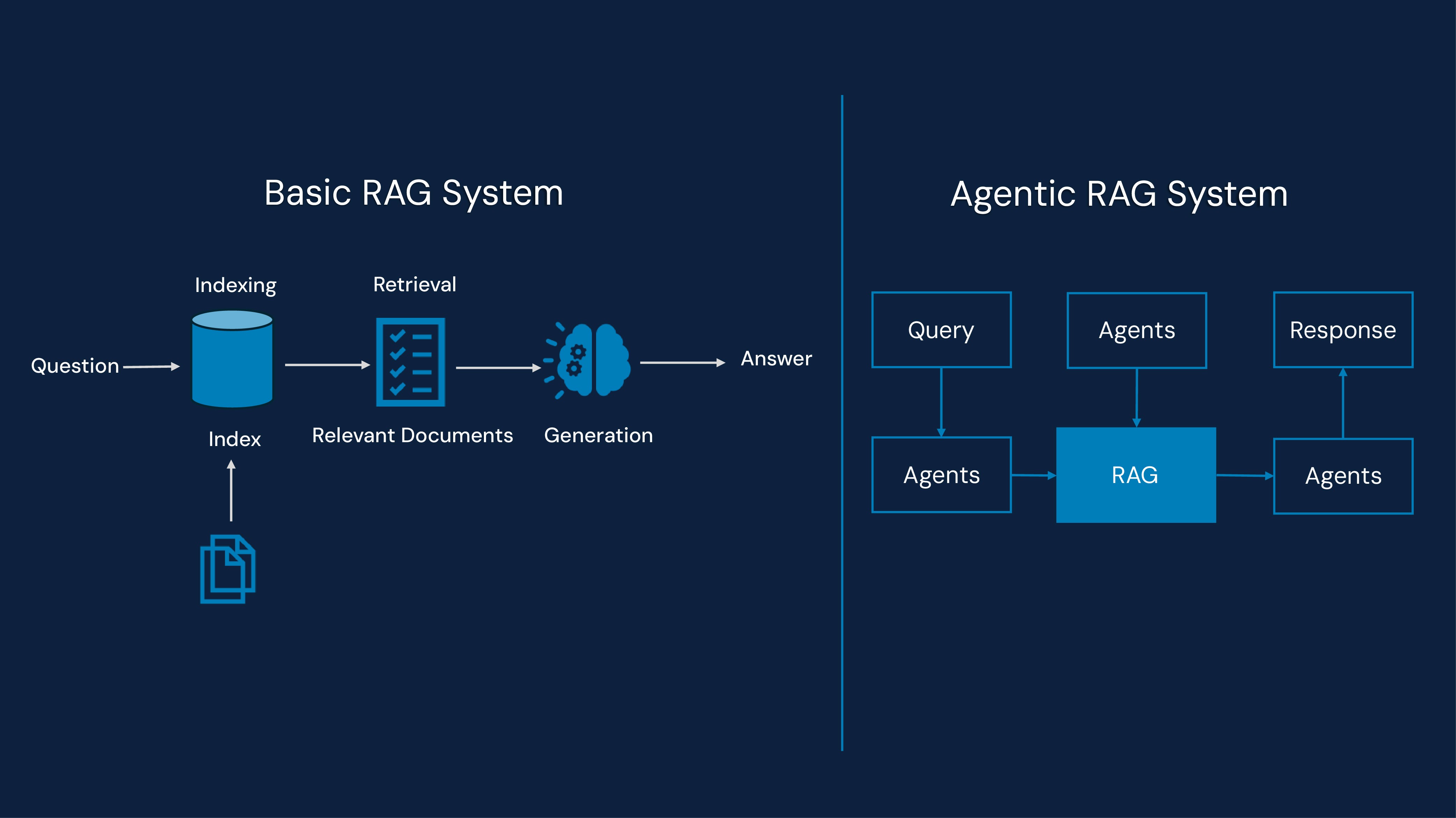

As AI systems become increasingly sophisticated, the methodologies by which they retrieve and generate information are also evolving. Two prominent frameworks, Retrieval-Augmented Generation (RAG) and Agentic RAG, offer distinct approaches to augmenting large language models (LLMs) with external knowledge within modern enterprise AI systems.

Retrieval-Augmented Generation (RAG) is a framework that enhances text generation models by integrating an external knowledge retrieval system. Rather than relying solely on pre-existing training data, RAG dynamically retrieves pertinent information from databases, APIs, or knowledge repositories to enhance the output of the LLM. This architecture is widely adopted as an LLM grounding technique to improve factual reliability and reduce hallucinations in domain-specific LLM applications.

Key components of RAG

Retrieval Mechanism – Searches for relevant information from an external knowledge base, supporting scalable knowledge retrieval systems.

Language Model (LLM) – Uses the retrieved data to generate contextually rich responses.

Fusion Layer – Integrates the retrieved information into the generated response to improve the accuracy of the LLM's output.
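To make the retrieval mechanism concrete, here is a minimal sketch that ranks documents against a query using bag-of-words cosine similarity. The function names (`tokenize`, `cosine`, `retrieve`) and the in-memory document list are assumptions made for illustration; production systems typically use embedding models and a vector index instead.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Return up to top_k documents that share terms with the query, best first."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(d))), d) for d in documents]
    scored = [(score, doc) for score, doc in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


# Hypothetical knowledge base used only for this example.
DOCS = [
    "Refunds are processed within five business days.",
    "Our office is closed on public holidays.",
    "Password resets require email verification.",
]

if __name__ == "__main__":
    print(retrieve("How long do refunds take?", DOCS, top_k=1))
```

Documents with no terms in common with the query are dropped rather than returned with a zero score, so the language model is not handed unrelated context.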

How does RAG work?

1. The user submits a query.
2. The retrieval module searches an external source for relevant documents.
3. The retrieved documents are fed into the language model, enriching the generated response.
4. The final response is produced using both the retrieved knowledge and the model’s pre-existing understanding, which also improves enterprise search results.
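The flow above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a reference implementation: retrieval is simple word overlap, the "fusion" step is plain prompt concatenation, and `echo_llm` is a hypothetical placeholder standing in for a real model call.

```python
import re
from typing import Callable


def words(text: str) -> set[str]:
    """Lowercased set of alphanumeric tokens in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Step 2: rank documents by how many query words they share."""
    q = words(query)
    ranked = sorted(documents, key=lambda d: len(q & words(d)), reverse=True)
    return [d for d in ranked[:top_k] if q & words(d)]


def build_prompt(query: str, context: list[str]) -> str:
    """Step 3: place the retrieved passages ahead of the question."""
    numbered = "\n".join(f"[{i}] {doc}" for i, doc in enumerate(context, start=1))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{numbered}\n\n"
        f"Question: {query}\nAnswer:"
    )


def answer(query: str, documents: list[str], llm: Callable[[str], str]) -> str:
    """Steps 1 to 4: query in, grounded response out."""
    context = retrieve(query, documents)
    return llm(build_prompt(query, context))


def echo_llm(prompt: str) -> str:
    """Placeholder model: reports the prompt size instead of generating text."""
    return f"(stub model received {len(prompt)} characters)"


# Hypothetical knowledge base used only for this example.
DOCS = [
    "Refunds are processed within five business days.",
    "Password resets require email verification.",
]

if __name__ == "__main__":
    print(build_prompt("How long do refunds take?", retrieve("How long do refunds take?", DOCS)))
    print(answer("How long do refunds take?", DOCS, echo_llm))
```

Passing the model in as a callable keeps the sketch independent of any particular LLM provider; swapping `echo_llm` for a real client is the only change needed to generate actual text.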

Benefits of RAG

Improved factual accuracy compared to standalone LLMs.

Access to real-time data for more up-to-date responses.

Reduced hallucination risk by grounding responses in external knowledge.

Supports scalable enterprise AI transformation initiatives by enabling secure knowledge access.

Limitations of RAG

Limited query optimization – The retrieval module may fetch irrelevant or redundant information.

Lack of autonomous refinement – RAG does not adapt queries dynamically.

No active verification – The retrieved information is used as-is without further validation.

Limited multi-step reasoning – A single retrieval pass offers little support for advanced AI workflow automation that requires multi-step reasoning.

Choosing the right retrieval framework for your AI

| Aspect | RAG | Agentic RAG |
| --- | --- | --- |
| Retrieval approach | Static, single-pass retrieval using predefined queries. | Multi-step, autonomous retrieval with dynamic query refinement powered by autonomous AI agents. |
| Query optimization | No real-time refinement; limited handling of ambiguity. | Actively refines queries based on intent gaps and context, in support of goal-driven AI systems. |
| Information verification | No verification; retrieved data is fed directly into the LLM. | Cross-checks sources; filters low-quality or conflicting information to improve LLM accuracy. |
| Self-learning and adaptability | No learning from user interactions; fixed retrieval path. | Learns from feedback; adapts via reinforcement learning from human feedback (RLHF), enabling intelligent process automation. |
| Response accuracy and context | May lack nuance or depth due to static retrieval. | Builds context before answering; discards irrelevant or redundant information. |
| Computational cost and latency | Fast and lightweight; optimized for speed. | Higher processing load; optimized for precision and reliability in complex enterprise automation platforms. |
| Use case suitability | Best for basic tasks like customer support and general-purpose assistants. | Well-suited for high-stakes domains like legal, financial, medical, and enterprise AI that require advanced AI workflow automation. |
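The difference between single-pass and agentic retrieval can be illustrated with a small loop that retrieves, verifies, checks what is still missing, and refines the query. Everything here is a simplified assumption: `required_terms` stands in for an agent's judgment about what the answer must cover, `trusted_sources` stands in for source verification, and refinement is just a re-query on the missing terms. Real agentic systems usually delegate these decisions to an LLM.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Passage:
    source: str
    text: str


@dataclass
class Trace:
    queries: list[str] = field(default_factory=list)
    evidence: list[Passage] = field(default_factory=list)


def words(text: str) -> set[str]:
    """Lowercased set of alphanumeric tokens in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: list[Passage], top_k: int = 2) -> list[Passage]:
    """Single retrieval pass: rank passages by word overlap with the query."""
    q = words(query)
    hits = [p for p in corpus if q & words(p.text)]
    hits.sort(key=lambda p: len(q & words(p.text)), reverse=True)
    return hits[:top_k]


def verify(passages: list[Passage], trusted_sources: set[str]) -> list[Passage]:
    """Hypothetical verification step: keep passages from trusted sources only."""
    return [p for p in passages if p.source in trusted_sources]


def agentic_retrieve(
    question: str,
    corpus: list[Passage],
    required_terms: set[str],
    trusted_sources: set[str],
    max_steps: int = 3,
) -> Trace:
    """Retrieve, verify, check coverage, and refine the query until done."""
    trace = Trace()
    query = question
    for _ in range(max_steps):
        trace.queries.append(query)
        for passage in verify(retrieve(query, corpus), trusted_sources):
            if passage not in trace.evidence:
                trace.evidence.append(passage)
        covered: set[str] = set()
        for passage in trace.evidence:
            covered |= words(passage.text)
        missing = required_terms - covered
        if not missing:
            break  # the evidence covers everything the answer needs
        query = " ".join(sorted(missing))  # refine: ask about what is missing
    return trace


# Hypothetical corpus used only for this example.
CORPUS = [
    Passage("policy", "Refunds are processed within five business days."),
    Passage("forum", "Refunds and shipping take forever, I heard."),
    Passage("policy", "Shipping to Europe costs ten euros."),
]

if __name__ == "__main__":
    result = agentic_retrieve(
        "How do refunds work?", CORPUS, {"refunds", "shipping"}, {"policy"}
    )
    print(result.queries)
    print([p.text for p in result.evidence])
```

The `max_steps` cap reflects the cost trade-off in the table: each extra retrieval round adds latency, so the loop stops as soon as the evidence covers the required terms.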

RAG vs. Agentic RAG: Top use cases

| Industry | RAG (Retrieval-Augmented Generation) | Agentic RAG |
| --- | --- | --- |
| Customer support | Enhances chatbots with accurate, document-grounded responses from FAQs, manuals, and help centers. | Adapts responses based on user history, context, and multi-turn reasoning for complex issue resolution using AI workflow automation. |
| Healthcare | Summarizes medical literature and retrieves clinical guidelines for faster decision support. | Synthesizes patient records, research, and treatment plans, verifying findings and applying adaptive reasoning within enterprise AI systems. |
| Finance and compliance | Retrieves policy documents and regulatory updates for quick reference. | Performs multi-step analysis of transactions, flags anomalies, and verifies compliance autonomously through goal-driven AI systems. |
| Enterprise knowledge | Pulls internal documentation from SharePoint, Notion, or Slack to answer employee queries. | Routes, verifies, and summarizes internal documents with context-aware filtering and goal-driven planning across enterprise automation platforms. |
| Education | Delivers personalized answers from course materials and academic databases. | Builds adaptive learning paths, refines queries, and generates tailored study plans using autonomous AI agents. |
| E-commerce | Answers product-related questions using inventory, order history, and support docs. | Diagnoses customer issues, initiates returns, and updates records through multi-agent workflows supporting intelligent process automation. |
| Scientific research | Retrieves relevant papers and data for literature reviews. | Plans multi-hop research queries, compares findings, and generates structured summaries via scalable AI infrastructure. |
| Business intelligence | Generates reports from structured data and dashboards. | Automates KPI analysis, trend detection, and decision support using external APIs and reasoning agents to accelerate enterprise AI transformation. |

Conclusion

Comparing RAG (Retrieval-Augmented Generation) with Agentic AI shows how the two technologies complement each other. RAG grounds responses in pertinent, real-world data, making it a reliable LLM grounding technique, whereas Agentic AI enables autonomous decision-making and proactive problem-solving through autonomous AI agents.

Together, they point toward more intelligent, dependable, and context-aware AI systems, accelerating enterprise AI transformation and reshaping how we interact with information and automation in daily life.