Reimagining complex query intelligence at scale

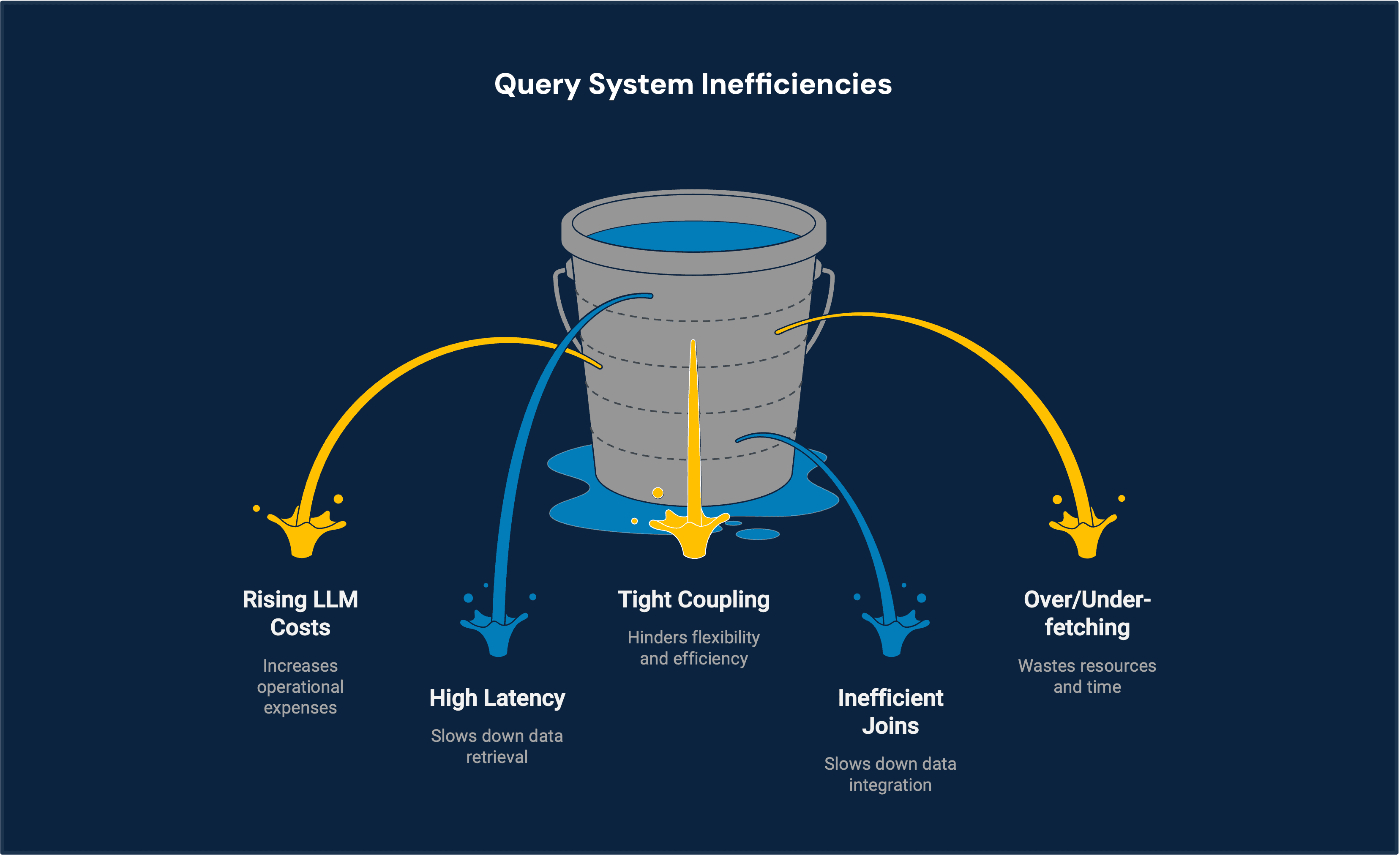

Traditional query systems struggle with large-scale, complex data operations that involve pagination, aggregation, and multi-source joins. Tightly coupling query parsing to execution causes over-fetching, high latency, and rising LLM costs, which limits scalability and flexibility as data environments evolve.

Key challenges

Rising LLM inference costs

High latency with large datasets

Tight coupling of query components

Inefficient multi-source data joins

Over-fetching and under-fetching of data

The solution

Intelligent orchestration

LLM intent detection

Selective tool activation

Reduced unnecessary calls

Dynamic execution decisions
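
To make the orchestration idea concrete, here is a minimal sketch of intent detection followed by selective tool activation. Everything named here is an assumption for illustration: `Intent`, `detect_intent`, `select_tools`, and the tool names are hypothetical, and a simple keyword check stands in for the LLM call so the example runs on its own.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Intent:
    """Hypothetical summary of what a query needs."""
    needs_join: bool
    needs_aggregation: bool
    needs_pagination: bool


def detect_intent(query: str) -> Intent:
    # Keyword stand-in for the LLM intent-detection step (assumption).
    q = query.lower()
    return Intent(
        needs_join=" across " in q or " join " in q,
        needs_aggregation=any(w in q for w in ("total", "average", "count", "sum")),
        needs_pagination=any(w in q for w in ("list", "show all", "page")),
    )


def select_tools(intent: Intent) -> List[str]:
    # Activate only the tools the detected intent calls for.
    tools = ["fetch"]
    if intent.needs_join:
        tools.append("join")
    if intent.needs_aggregation:
        tools.append("aggregate")
    if intent.needs_pagination:
        tools.append("paginate")
    return tools


plan = select_tools(detect_intent("Total revenue across CRM and billing"))
print(plan)
```

In this sketch a query that needs no join or aggregation never touches those tools, which is where the reduction in unnecessary calls would come from.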

Scalable execution

Multi-source joins

Server-side computation

Streaming-first delivery

Pagination + aggregation engine
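
The execution side can be sketched in the same spirit. The example below is an illustration under stated assumptions, not the actual engine: `join_sources`, `paginate`, and `sum_by` are hypothetical helpers, rows are plain dictionaries, and the right-hand source is assumed to have unique join keys. Generators stand in for streaming-first delivery, so pages are produced as soon as they are full.

```python
from typing import Dict, Iterable, Iterator, List

Row = Dict[str, object]


def join_sources(left: Iterable[Row], right: Iterable[Row], key: str) -> Iterator[Row]:
    # Hash join: index the right source once, then stream the left source.
    # Assumes each key appears at most once on the right side.
    index: Dict[object, Row] = {r[key]: r for r in right}
    for row in left:
        match = index.get(row[key])
        if match is not None:
            yield {**row, **match}


def paginate(rows: Iterable[Row], page_size: int) -> Iterator[List[Row]]:
    # Emit fixed-size pages incrementally instead of materializing all rows.
    page: List[Row] = []
    for row in rows:
        page.append(row)
        if len(page) == page_size:
            yield page
            page = []
    if page:
        yield page


def sum_by(rows: Iterable[Row], group_key: str, value_key: str) -> Dict[object, float]:
    # Server-side aggregation: only the totals leave this function.
    totals: Dict[object, float] = {}
    for row in rows:
        group = row[group_key]
        totals[group] = totals.get(group, 0.0) + float(row[value_key])
    return totals


orders = [
    {"customer_id": 1, "amount": 10.0},
    {"customer_id": 2, "amount": 5.0},
    {"customer_id": 1, "amount": 2.5},
]
customers = [
    {"customer_id": 1, "region": "EU"},
    {"customer_id": 2, "region": "US"},
]

joined = list(join_sources(orders, customers, "customer_id"))
totals = sum_by(joined, "region", "amount")
pages = list(paginate(joined, page_size=2))
```

Returning aggregated totals or one page at a time, rather than the full joined result, is the kind of design that avoids over-fetching in this sketch.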

1. Smart design

Modular

Understand intent

No rigid integrations

Handle aggregation/pagination

2. Efficient processing

Guided workflows

Conditional logic

Optimized and scalable

Real-time structured results

Performance gains

Lower latency

Real-time feedback

Faster query execution

Cost efficiency

Reduced LLM usage

Optimized compute load

Minimal redundant calls

Scalability

Handles large datasets

Multi-source flexibility

Adapts to query complexity

Engineering productivity

Reusable components

Simplified maintenance

Faster development cycles

User experience

Streaming responses

Incremental insights

Better responsiveness