Shift left for AI: Turning data products into the control layer for reliable AI decisions

Authors

Subeer Sehgal

Principal Consultant, Cloud & Data Tech

Arushi Bafna

Engagement Manager, Cloud & Data Tech

Anjali Rawat

Senior Consultant, Cloud & Data Tech

Abhishek Shahji

Engagement Manager, Cloud & Data Tech

AI’s vulnerability, not just its strength, begins with data

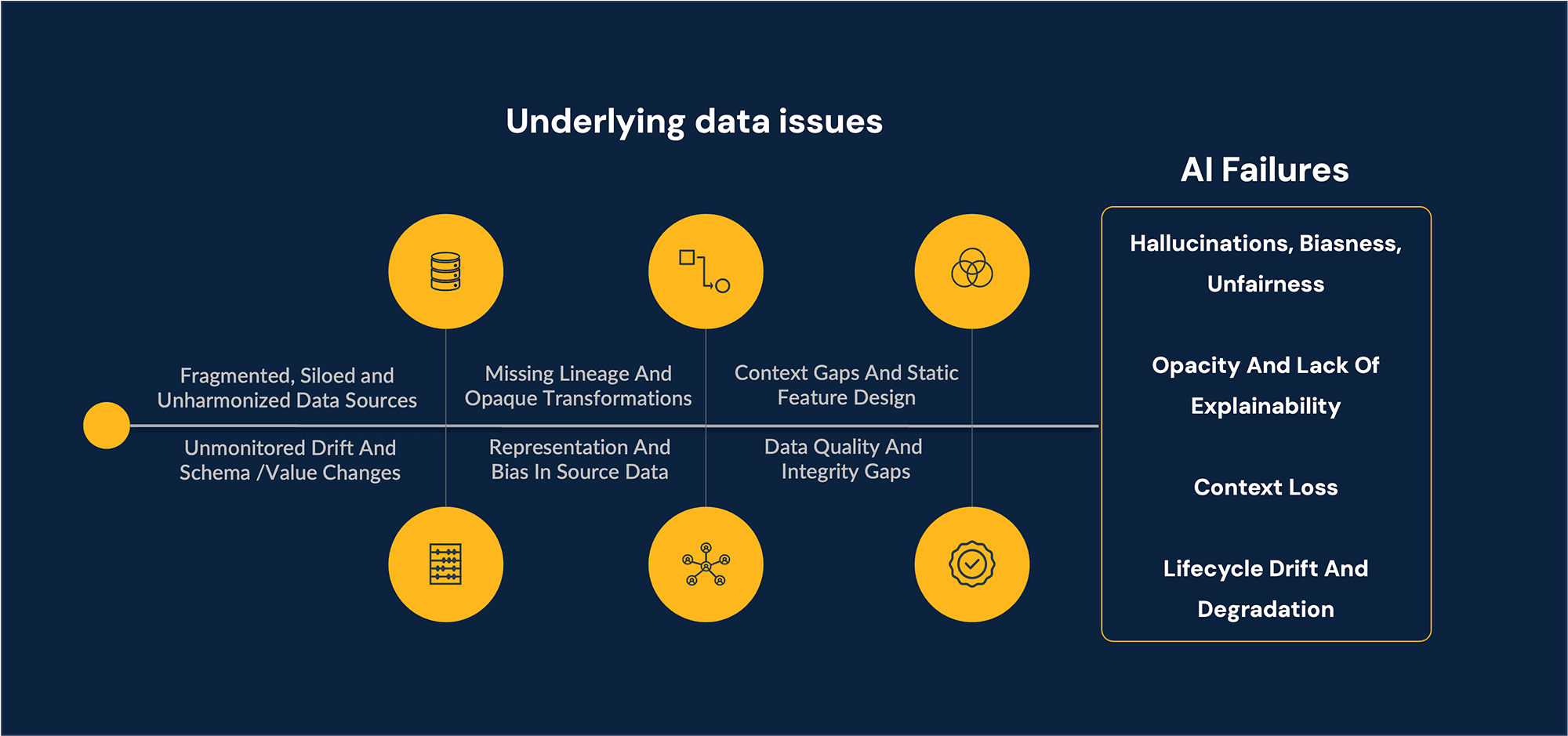

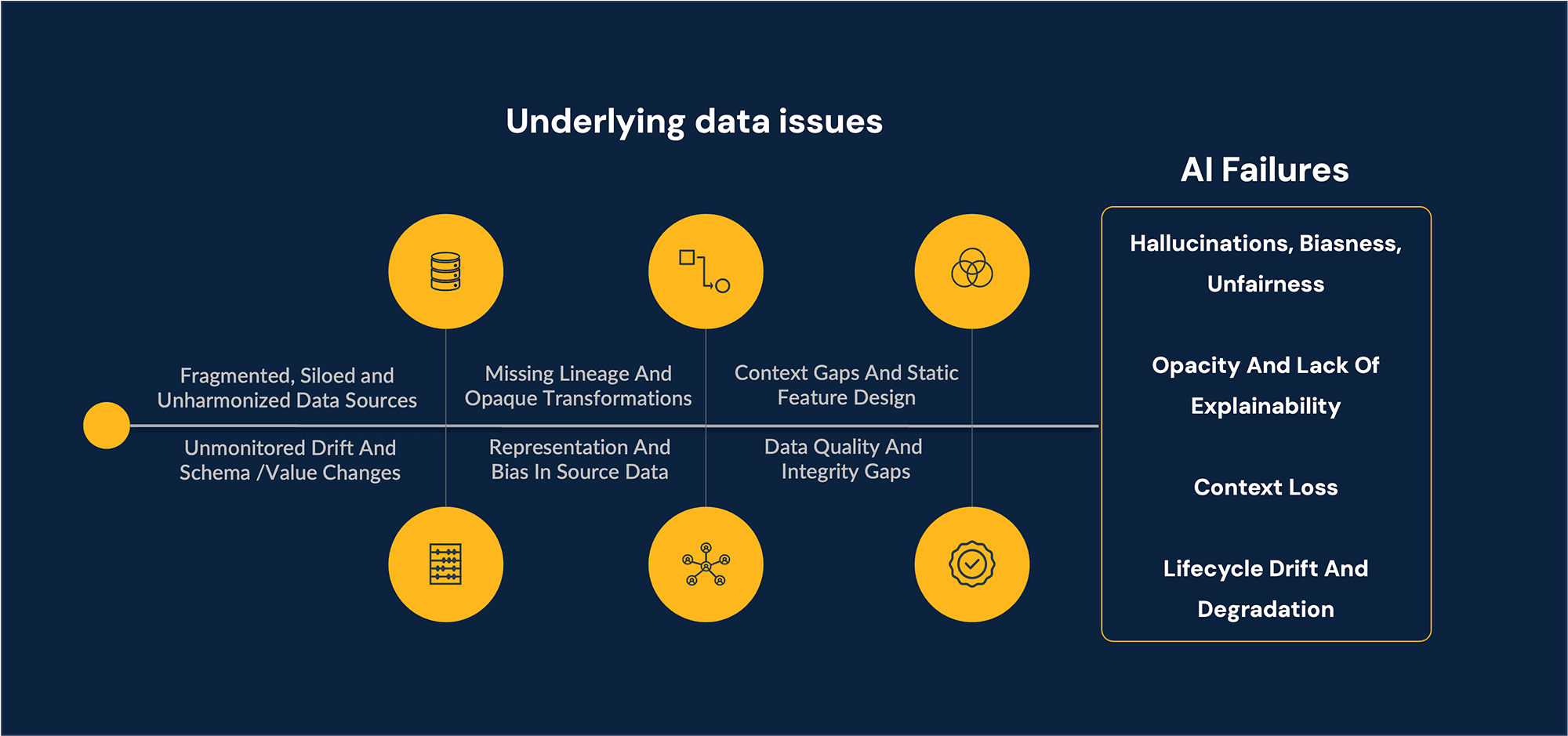

Enterprises are rapidly embedding AI into operational decisions (loan approvals, claims triage, diagnosis assistance, collections treatment, and more), yet many still rely on brittle pipelines and opaque models with limited oversight. Behind these systems lies a fragile truth: AI fails more often because of bad data than because of bad algorithms. And these systems increasingly influence outcomes that carry financial, ethical, and societal consequences.

Such failures are not random; they are the predictable consequences of upstream data weaknesses. The implication for governance is clear: if we want reliable, fair, and explainable AI at scale, we must move the control point closer to the source.

Shift left to mitigate hidden AI flaws and failures

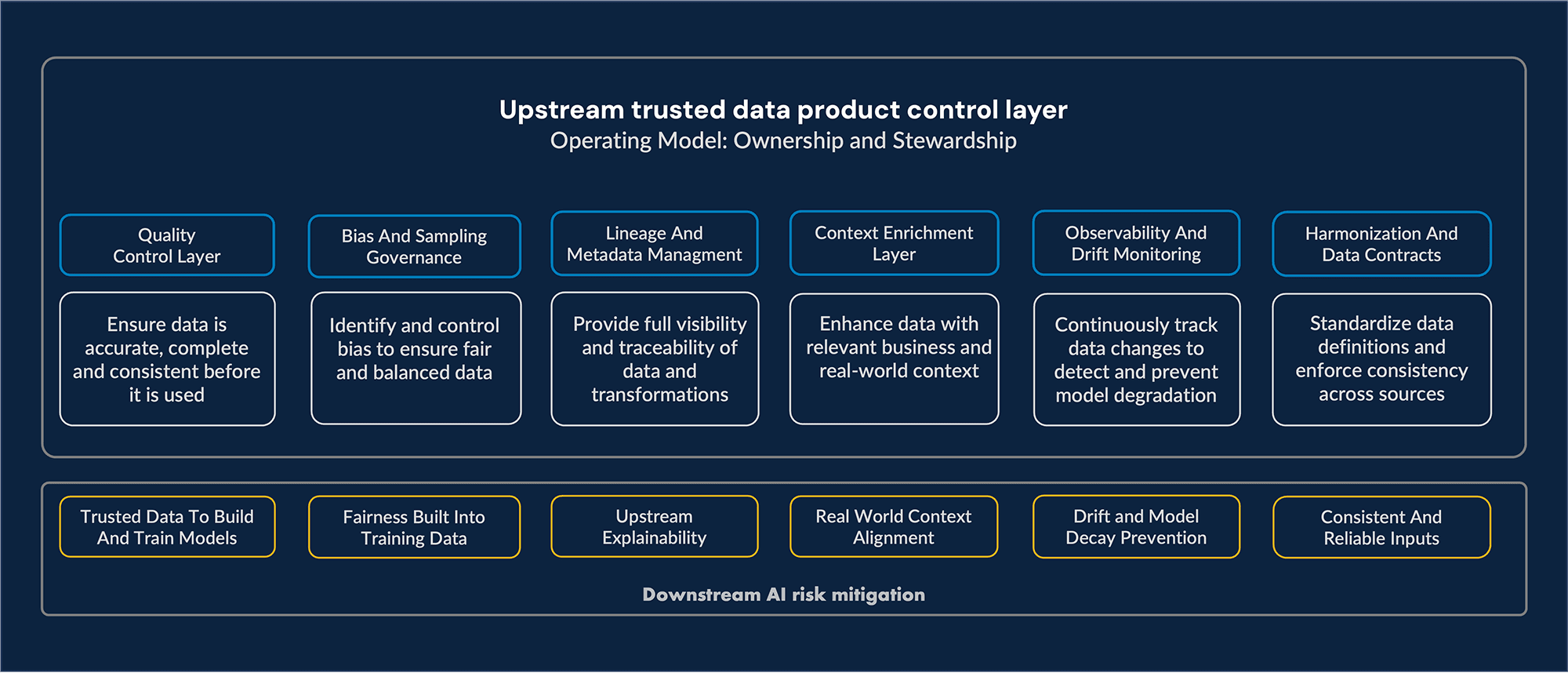

Shift left is the commitment to move risk controls upstream, because most AI risks originate long before a model is trained. When biased samples, missing context, opaque transformations, or silent drift slip into the pipeline, the model amplifies them at scale.

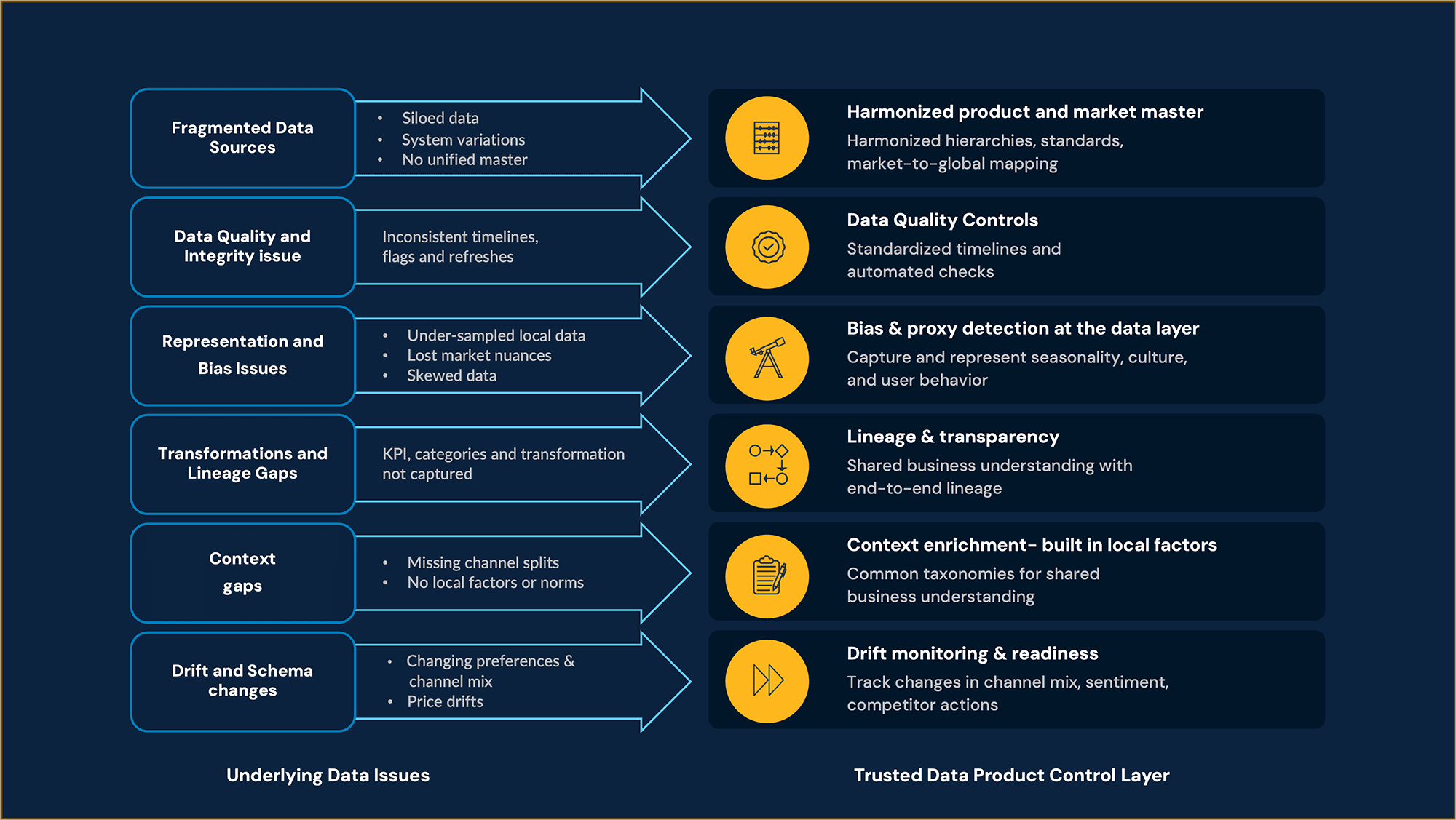

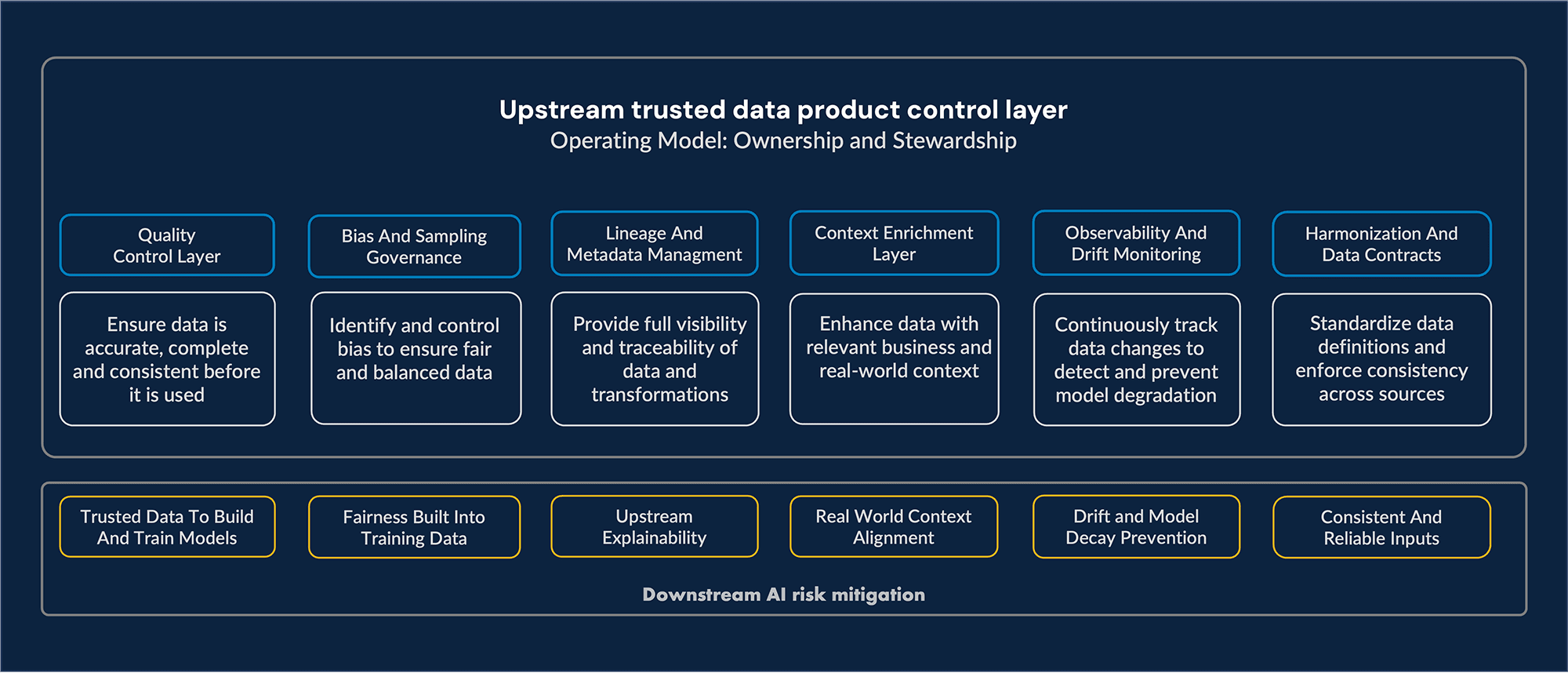

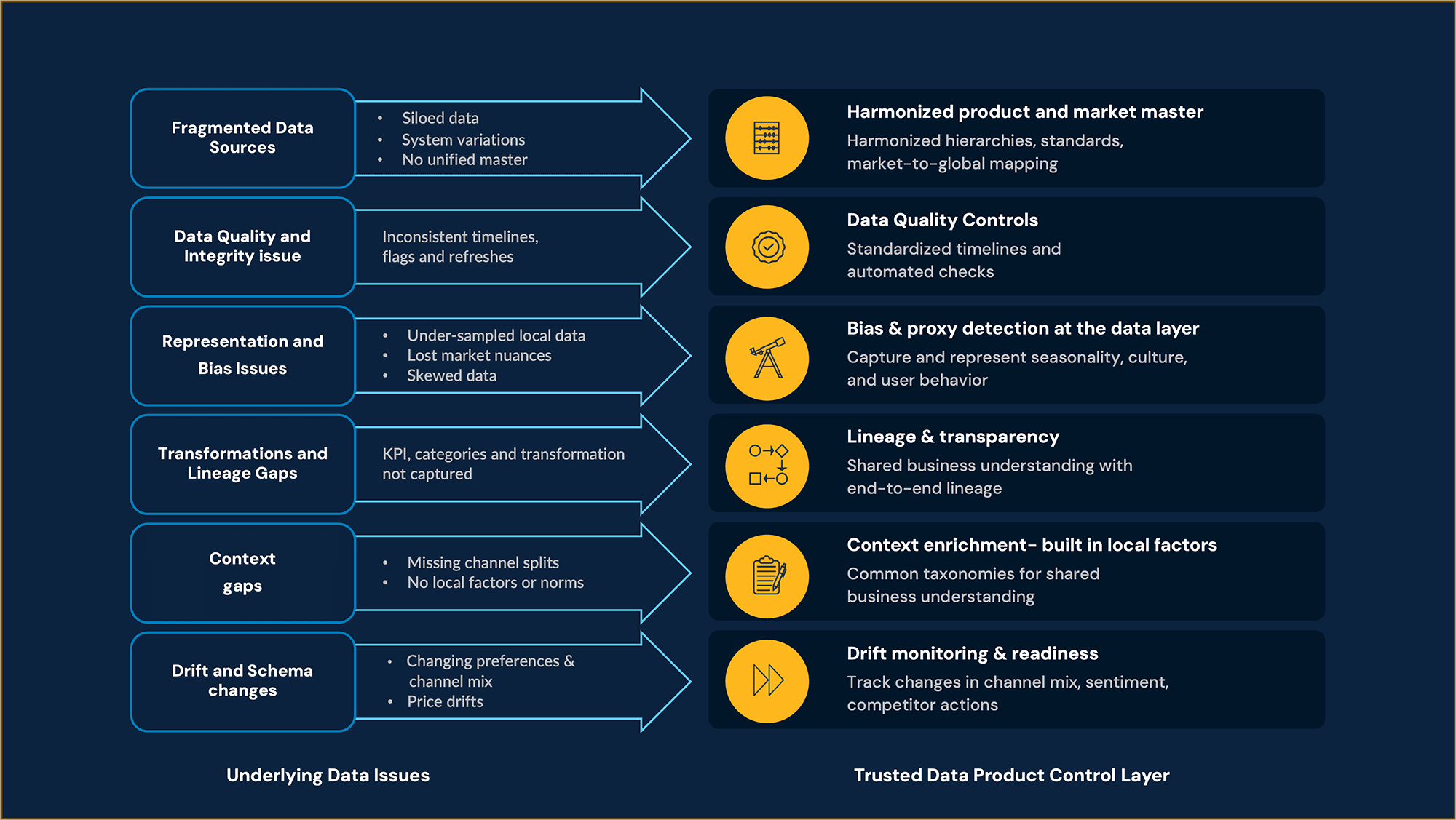

Rather than firefighting bad outcomes, organizations should treat the data layer as the control point, with a Trusted Data Product (TDP) acting as a safe, contractual layer between data, AI models, and decision makers. By embedding quality, representation, lineage, context, and drift controls directly into the TDP, we intercept issues before they propagate, turning data from a vulnerability into a strategic control point. The figure below shows where a TDP places targeted controls to intercept data issues early.

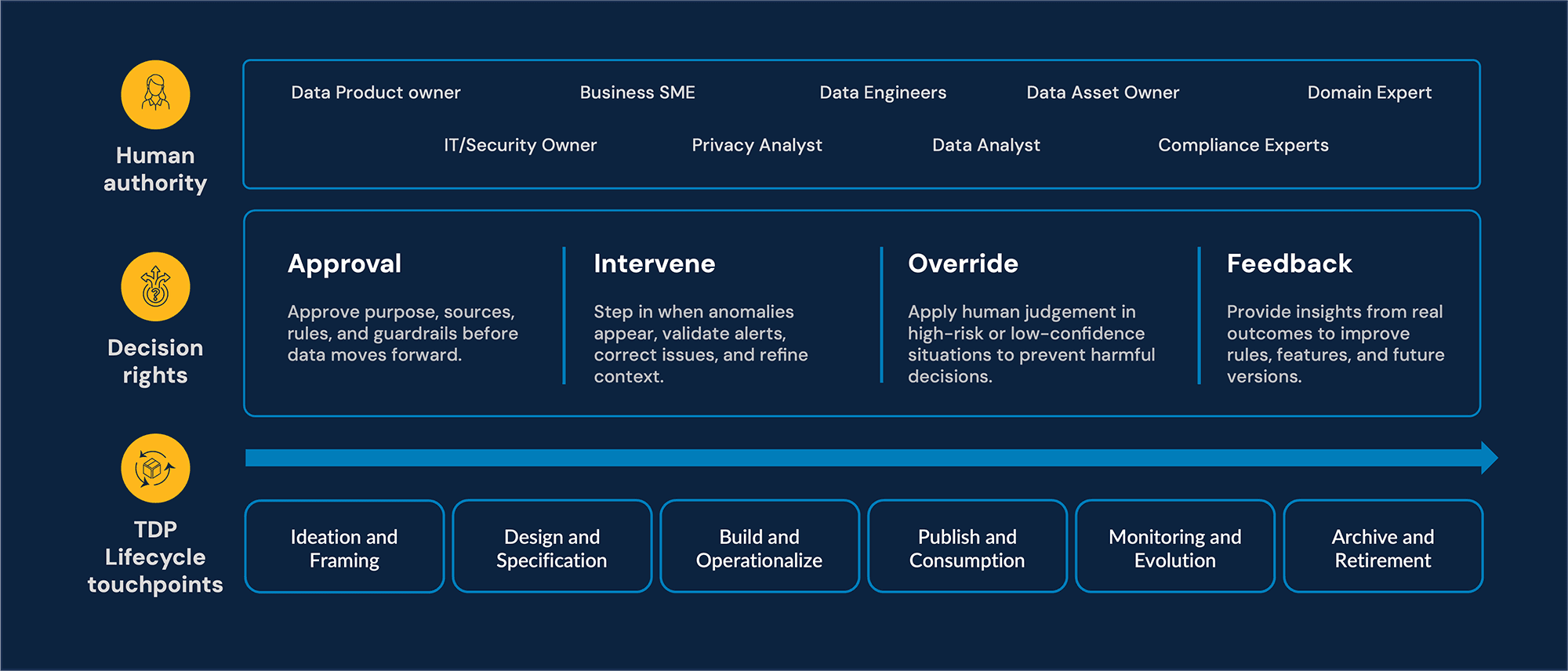
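As a rough illustration of these controls, the sketch below treats a TDP as a release gate that runs quality, representation, lineage, and drift checks before a batch reaches a model. The field names (`market`, `channel`, `source_system`), the thresholds, and the choice of the Population Stability Index (PSI) as the drift measure are our own illustrative assumptions, not a prescribed implementation; context controls are left out for brevity.

```python
import math
from collections import Counter
from dataclasses import dataclass


@dataclass
class CheckResult:
    name: str
    passed: bool
    detail: str


def check_completeness(rows, required, max_incomplete=0.02):
    """Quality: share of rows missing any required field must stay under a threshold."""
    missing = sum(1 for r in rows if any(r.get(f) is None for f in required))
    rate = missing / len(rows) if rows else 1.0
    return CheckResult("quality", rate <= max_incomplete, f"incomplete rows: {rate:.1%}")


def check_representation(rows, segment, expected, min_share=0.05):
    """Representation: every expected segment (e.g. market) needs at least min_share of rows."""
    counts = Counter(r.get(segment) for r in rows)
    total = max(len(rows), 1)
    thin = sorted(s for s in expected if counts.get(s, 0) / total < min_share)
    return CheckResult("representation", not thin, f"under-represented: {thin}")


def check_lineage(rows, lineage_field="source_system"):
    """Lineage: every row must carry a traceable source."""
    untraced = sum(1 for r in rows if not r.get(lineage_field))
    return CheckResult("lineage", untraced == 0, f"rows without lineage: {untraced}")


def population_stability_index(baseline, current, eps=1e-6):
    """PSI over categorical values: sum of (cur - base) * ln(cur / base) per category.

    Assumes both inputs are non-empty; eps avoids log(0) for unseen categories.
    """
    base, cur = Counter(baseline), Counter(current)
    psi = 0.0
    for cat in set(base) | set(cur):
        b = max(base[cat] / len(baseline), eps)
        c = max(cur[cat] / len(current), eps)
        psi += (c - b) * math.log(c / b)
    return psi


def check_drift(baseline, current, threshold=0.2):
    """Drift: flag when the current distribution moves too far from the baseline."""
    psi = population_stability_index(baseline, current)
    return CheckResult("drift", psi < threshold, f"PSI {psi:.3f}")


def release_gate(rows, baseline_channels, required, markets):
    """Run every control; the data product is released to models only if all pass."""
    results = [
        check_completeness(rows, required),
        check_representation(rows, "market", markets),
        check_lineage(rows),
        check_drift(baseline_channels, [r.get("channel") for r in rows]),
    ]
    return all(r.passed for r in results), results


if __name__ == "__main__":
    rows = [
        {"market": "IN", "channel": "tv", "spend": 100.0, "source_system": "erp_in"},
        {"market": "US", "channel": "digital", "spend": 250.0, "source_system": "erp_us"},
        {"market": "BR", "channel": "digital", "spend": 80.0, "source_system": None},
    ]
    baseline = ["tv", "digital", "tv", "digital"]
    released, results = release_gate(rows, baseline, ["market", "spend"], ["IN", "US", "BR", "ID"])
    print("released:", released)
    for r in results:
        print(f"{r.name:<15} {'PASS' if r.passed else 'FAIL'}  {r.detail}")
```

Run as a script, the sample batch is blocked: the hypothetical `ID` market is absent and one `BR` row has no source system, while the quality and drift checks pass. The design point is that each failure surfaces as a named, explainable check at the data layer rather than as unexplained model behavior later.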

Shift left works only when accountability has clear owners. This is where governance becomes a live practice: data stewards keep quality and semantics honest; domain experts inject and evolve business context; risk and compliance teams set guardrails; and operations teams close the loop with real-world feedback. In other words, Trusted Data Products provide the control plane, but human accountability is the engine that keeps it credible and audit-ready.

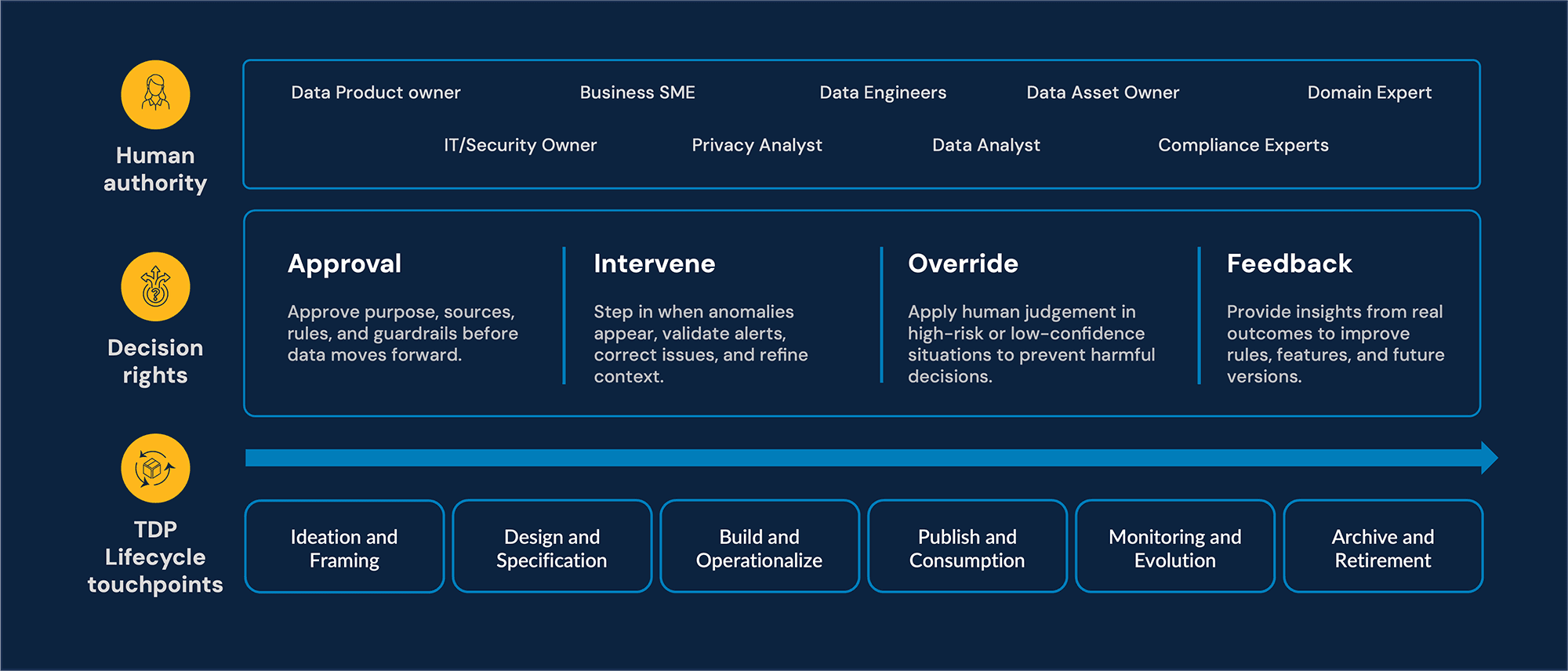

The human backbone behind trusted data products

A Trusted Data Product (TDP) should be treated as a managed asset with a lifecycle, and every stage of that lifecycle should have a human checkpoint that keeps the data compliant and usable for AI. In high-impact scenarios, humans must retain the ability to intervene, question, override, and sign off on decisions. Well-designed data products can surface uncertainty, expose assumptions, and embed human judgment at the points where decisions are made. Below, we outline the human backbone for Trusted Data Products: the roles, touchpoints, and decision rights that safeguard judgment, ethics, and contextual nuance.

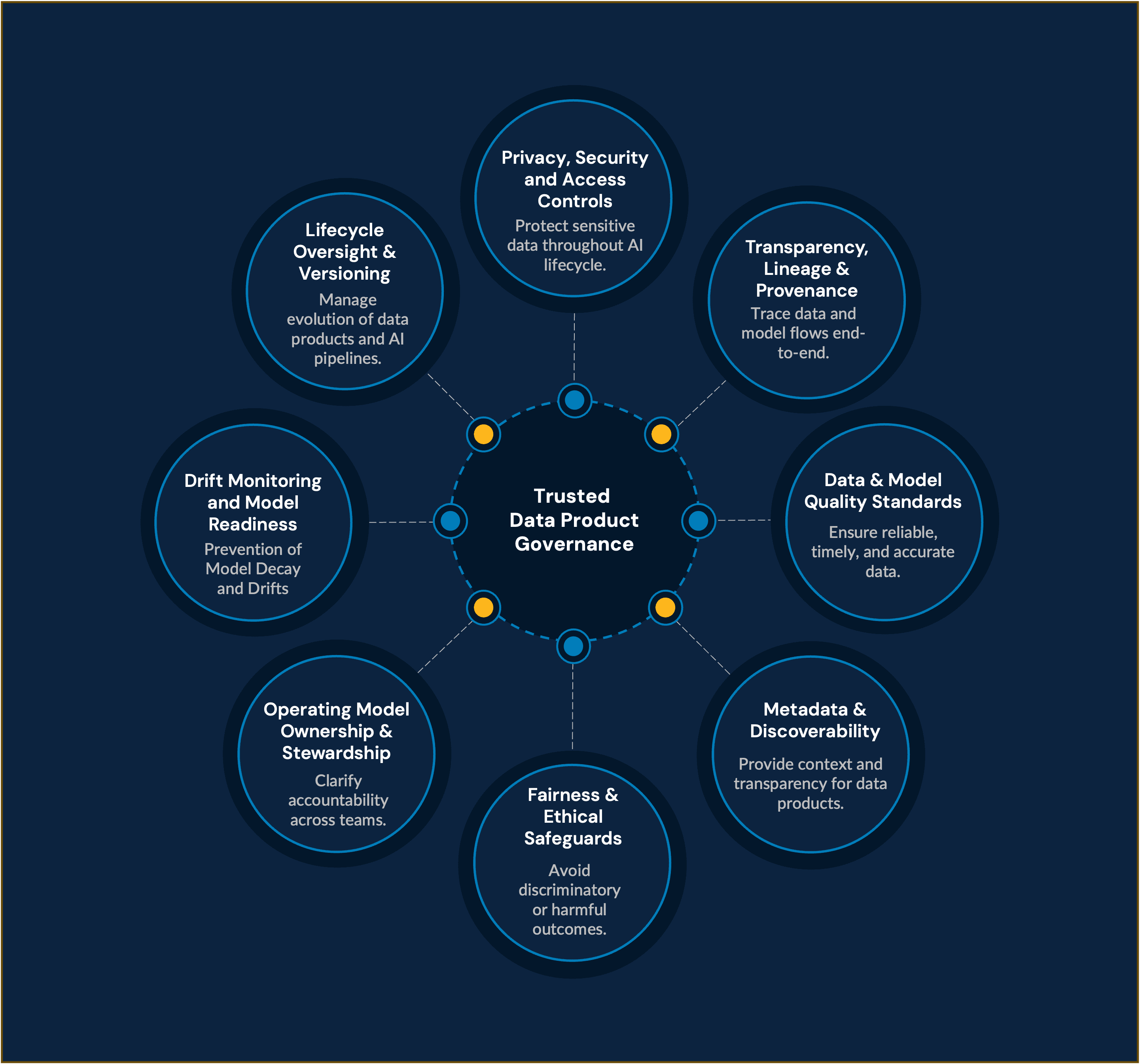

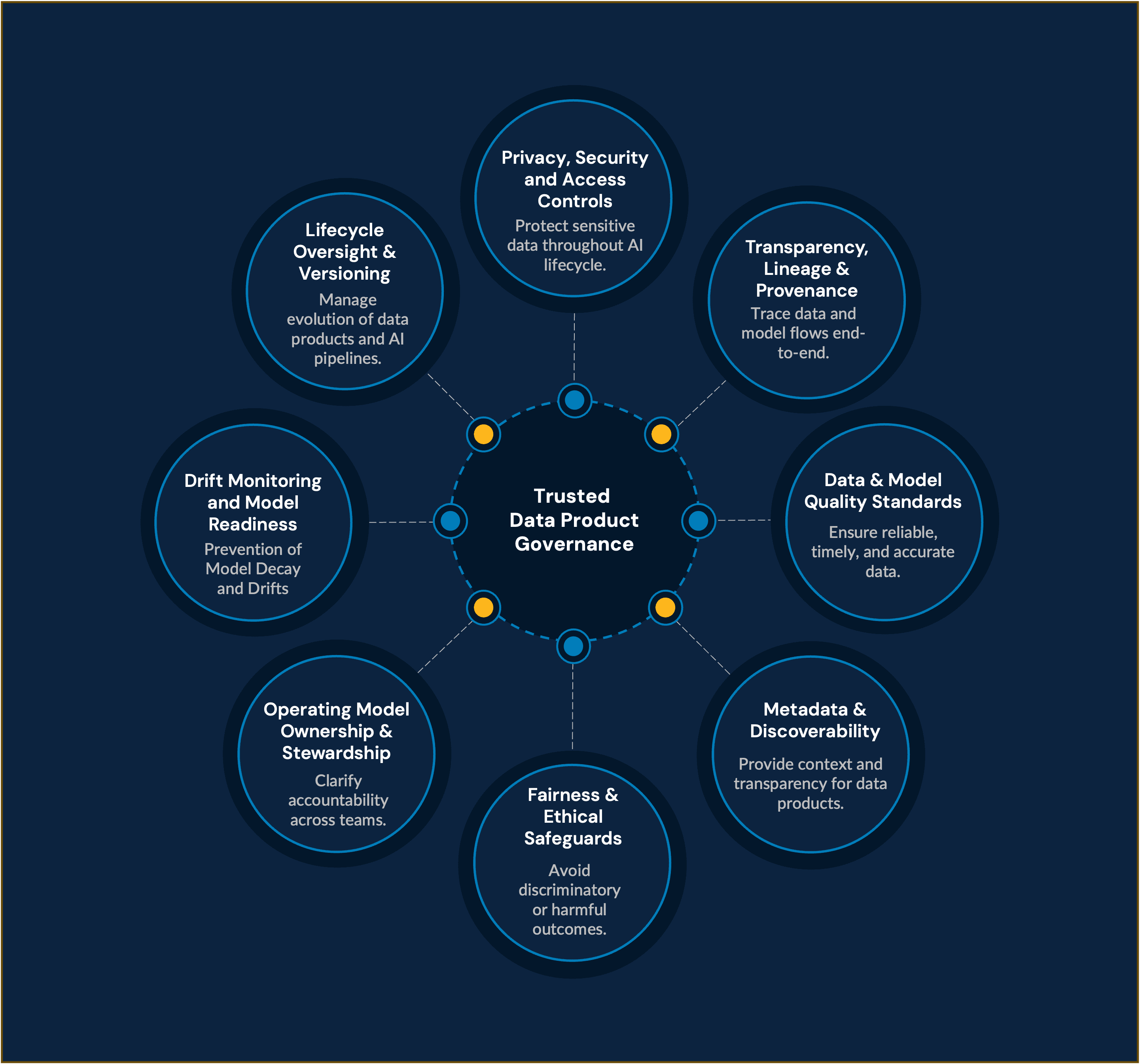
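To make decision rights concrete, here is a minimal sketch of lifecycle checkpoints, assuming a hypothetical four-stage lifecycle (`define`, `certify`, `publish`, `monitor`) and the four roles named earlier in this paper. The stage names, the mapping of roles to stages, and the `DataProduct` class are illustrative assumptions, not a reference design.

```python
from dataclasses import dataclass, field
from enum import Enum


class Role(Enum):
    DATA_STEWARD = "data steward"
    DOMAIN_EXPERT = "domain expert"
    RISK_COMPLIANCE = "risk and compliance"
    OPERATIONS = "operations"


# Hypothetical lifecycle: each stage lists the roles whose sign-off it requires.
STAGE_APPROVERS = {
    "define": {Role.DOMAIN_EXPERT, Role.DATA_STEWARD},
    "certify": {Role.DATA_STEWARD, Role.RISK_COMPLIANCE},
    "publish": {Role.RISK_COMPLIANCE},
    "monitor": {Role.OPERATIONS},
}
STAGES = list(STAGE_APPROVERS)


@dataclass
class DataProduct:
    name: str
    stage: str = "define"
    signoffs: dict = field(default_factory=dict)  # stage -> {Role: approver name}
    audit_log: list = field(default_factory=list)  # (stage, role, person, action) tuples

    def sign_off(self, role: Role, approver: str) -> None:
        """Record a named human approval; only roles with decision rights at this stage may sign."""
        if role not in STAGE_APPROVERS[self.stage]:
            raise PermissionError(f"{role.value} has no decision right at '{self.stage}'")
        self.signoffs.setdefault(self.stage, {})[role] = approver
        self.audit_log.append((self.stage, role.value, approver, "approved"))

    def missing_signoffs(self) -> set:
        return STAGE_APPROVERS[self.stage] - set(self.signoffs.get(self.stage, {}))

    def promote(self) -> str:
        """Advance to the next stage only when every required role has signed off."""
        missing = self.missing_signoffs()
        if missing:
            raise RuntimeError(f"blocked at '{self.stage}': awaiting {sorted(r.value for r in missing)}")
        idx = STAGES.index(self.stage)
        if idx + 1 < len(STAGES):
            self.stage = STAGES[idx + 1]
            self.audit_log.append((self.stage, "system", "-", "entered"))
        return self.stage

    def intervene(self, role: Role, person: str, reason: str, back_to: str = "certify") -> None:
        """Any accountable role can pull the product back for review; later approvals are voided."""
        for stage in STAGES[STAGES.index(back_to):]:
            self.signoffs.pop(stage, None)
        self.stage = back_to
        self.audit_log.append((back_to, role.value, person, f"intervened: {reason}"))
```

In this sketch a product cannot move forward until the named roles sign off, a role without decision rights at a stage is rejected, and an intervention voids later approvals and is written to the audit log, which is what keeps the record audit-ready.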

Governance principles for trusted data products

While the human backbone defines who makes the decisions, governance provides consistency, transparency, and accountability to ensure every Trusted Data Product is built and managed to the same standard.

The governance principles below form a stable framework that helps organizations scale AI capabilities with confidence rather than added risk. They create the conditions under which human decisions are trusted, repeatable, and aligned, no matter how many teams or systems rely on the data.

By applying these principles consistently, the TDP becomes a disciplined foundation: data issues are caught earlier, quality and context are preserved, and AI systems receive inputs they can genuinely rely on.

| Governance for trusted data product | Governance enablers and offerings |
|---|---|
| Governance for trust | |
| Governance for transparency | |
| Governance for reliability | |
| Governance for accountability | |

The result is a straight line from governance to better data, from better data to more resilient TDPs, and from resilient TDPs to AI outcomes that are fairer, clearer, and far more predictable, with human authority built in.

Use case: Global/Local marketing decisions

A consumer packaged goods (CPG) organization wants to improve marketing decisions (campaign planning, media mix, pricing, assortment, and innovation insights) using data sourced from multiple markets, categories, and customer touchpoints. Its markets operate independently, use different systems, and follow inconsistent definitions. Leadership wants a single, trusted view that supports global strategy without losing local nuance.

Common failures:

Marketing models and optimization engines face predictable AI failures and risks:

Inconsistent definitions break cross‑market comparability

Bias toward larger markets where data is richer, underrepresenting emerging regions

Loss of context when global models ignore local media behavior, pricing dynamics, or cultural seasonality

Drift when consumer sentiment, competitor tactics, or distribution conditions change

Opaque insights when data lineage is unclear, making it hard for marketers to trust recommendations
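For the first failure in this list, inconsistent definitions, here is a minimal sketch of what a TDP's semantic layer might do: map each market's local metric names onto one global definition, keep the local name alongside it, and route anything unmapped to a steward instead of dropping it. The market codes, metric names, and the `METRIC_MAP` table are hypothetical, and values are assumed to be already converted to a common currency.

```python
from dataclasses import dataclass

# Hypothetical semantic mapping: (market, local metric name) -> global metric definition.
# In practice this mapping would be owned by data stewards and domain experts.
METRIC_MAP = {
    ("US", "net_sales_usd"): "net_revenue",
    ("IN", "nsv"): "net_revenue",
    ("BR", "receita_liquida"): "net_revenue",
    ("US", "promo_spend"): "trade_spend",
    ("IN", "trade_promo"): "trade_spend",
}


@dataclass(frozen=True)
class HarmonizedRecord:
    market: str
    global_metric: str
    local_metric: str  # kept for lineage and so local teams recognize their own KPI
    value: float


def harmonize(records, mapping):
    """Map local metric names onto global definitions without silently dropping anything."""
    harmonized, unmapped = [], []
    for market, local_metric, value in records:
        global_metric = mapping.get((market, local_metric))
        if global_metric is None:
            # Route for steward review instead of discarding: silently dropping unmapped
            # rows could hide smaller or newer markets and skew models toward larger ones.
            unmapped.append((market, local_metric))
        else:
            harmonized.append(HarmonizedRecord(market, global_metric, local_metric, value))
    return harmonized, unmapped


def totals_by_metric(harmonized):
    """Aggregate per global metric and market, now comparable across markets."""
    totals = {}
    for r in harmonized:
        per_market = totals.setdefault(r.global_metric, {})
        per_market[r.market] = per_market.get(r.market, 0.0) + r.value
    return totals


if __name__ == "__main__":
    records = [
        ("US", "net_sales_usd", 120.0),
        ("IN", "nsv", 40.0),
        ("BR", "receita_liquida", 15.0),
        ("ID", "penjualan_bersih", 9.0),  # emerging market with no mapping yet
    ]
    harmonized, unmapped = harmonize(records, METRIC_MAP)
    print(totals_by_metric(harmonized))
    print("needs steward review:", unmapped)
```

With the sample data, US, IN, and BR roll up into a comparable `net_revenue` total, while the hypothetical ID record is surfaced for review rather than disappearing, which addresses both the comparability and the representation risks listed above.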

Underlying data issues and how shift left with a trusted data product resolves them

AI decisions with trusted data product controls

With a trusted data product foundation, the organization proceeds with clear global alignment, sharper local execution, and stronger marketing ROI:

A single, trusted data layer for global + local decisions

Models that reflect local nuance without losing global comparability

Faster, automated insight generation

Transparent, explainable KPIs trusted by country teams

Reduction in manual reconciliation and inconsistent reporting

Better AI recommendations for spend allocation, pricing, and innovation bets

A future with trusted AI

A strong data product turns complexity into clarity: it gives teams confidence that what flows into their models is accurate, contextual, and governed. As we look ahead, the organizations that succeed with AI won’t simply have better algorithms; they’ll have better data foundations. AI only becomes dependable when the data behind it is trustworthy and when people are empowered to govern that data with clarity and intent. The path forward is clear: build intelligence on a foundation that is transparent, well governed, and shaped by people who understand the business, the risks, and the stakes.

Recognition and achievements

Select Fractal accolades

Named leader

Customer analytics service provider Q2 2025

Representative vendor

Customer analytics service provider Q1 2021

Great Place to Work

9th year running. Certifications received for India, USA, UK, and UAE

Registered Office:

Level 7, Commerz II, International Business Park, Oberoi Garden City,

Off W. E. Highway Goregaon (E), Mumbai - 400063, Maharashtra, India.

Phone: +91 22 6850 5800

Email: investorrelations@fractal.ai

CIN : L72400MH2000PLC125369

GST Number (Maharashtra) : 27AAACF4502D1Z8